- Google's official position is that it rewards helpful content regardless of how it was produced, whether AI or human.

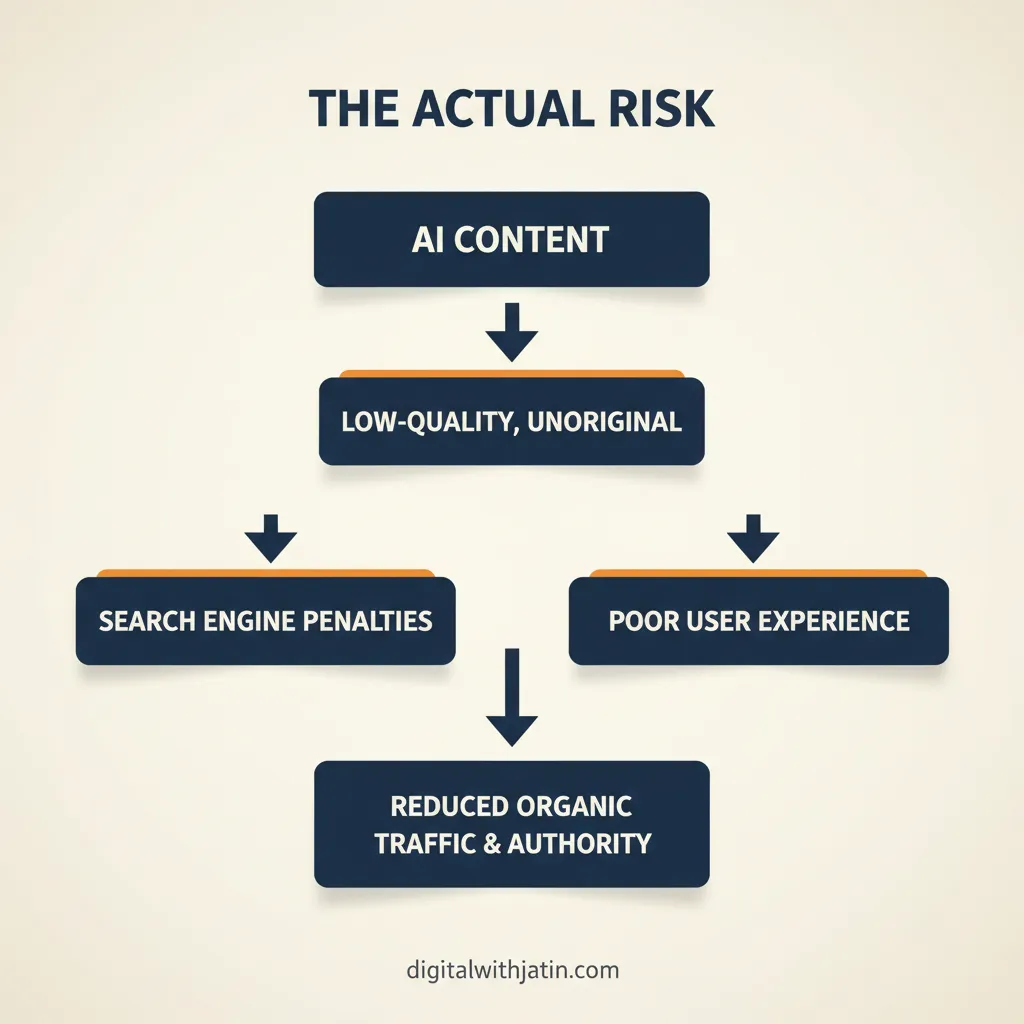

- AI content hurts SEO when it is published without editorial review, contains fabricated data, lacks original insight, or is scaled to manipulate rather than inform.

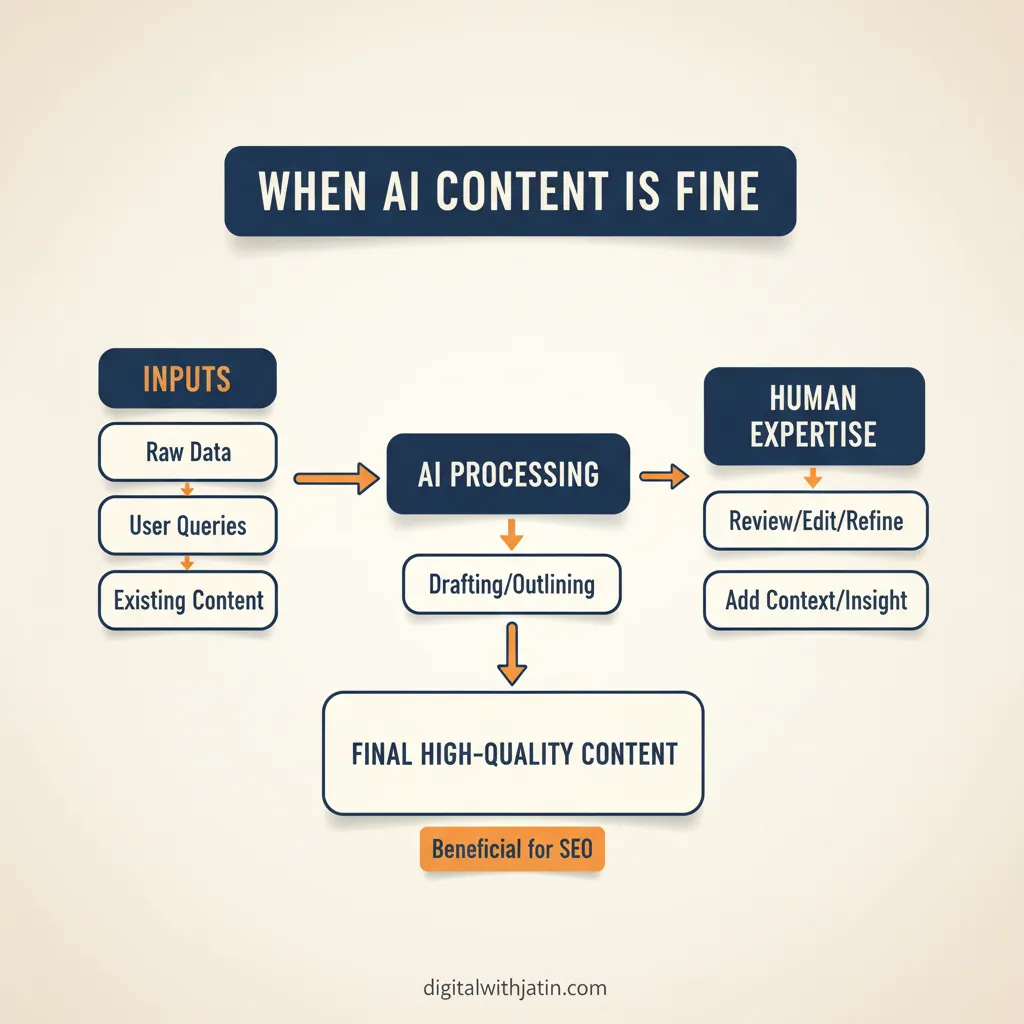

- AI content is fine when it goes through a real editorial process: fact-checking, voice editing, and a human judgment call on whether it serves the reader.

- The pattern that gets sites penalised is mass-produced, unedited AI output, not AI-assisted writing with human oversight.

In mid-2023, a content site producing around 200 AI-generated articles per month saw a significant drop in organic traffic after a Google core update. The site’s owner had been publishing AI drafts with minimal editing, no fact-checking, and no original research. The traffic loss was not random. The pages that fell hardest were the ones with the most generic content, the fewest cited sources, and no first-person experience signals.

That example is not an indictment of AI content. It is an indictment of unedited content. The question “is AI content bad for SEO” has a conditional answer, and the condition is entirely about editorial process.

The direct answer

AI content is not inherently bad for SEO. Google’s position, stated explicitly in its February 2023 Search Central post on AI content, is that it rewards helpful, people-first content regardless of production method. AI content hurts rankings when it is published without editorial review, when it fabricates data, when it lacks original insight, or when it is produced in bulk to fill keyword gaps rather than serve readers.

The line is not between AI content and human content. The line is between helpful content and unhelpful content. The standard is defined in the Google helpful content guidance, and it applies to everything.

When AI content is bad for SEO

Pattern 1: No editorial review

Publishing an AI draft without a human pass is the most common failure mode. The draft is grammatically correct, structurally reasonable, and entirely generic. It covers the same ground as every other page on the topic, adds nothing the reader cannot get from the top result, and signals no real experience with the subject.

Google’s quality rater guidelines weight first-hand experience (the first E in E-E-A-T) as a signal of content quality. An AI model has no first-hand experience. A human editor who has done the work being described can add it in one pass. Without that pass, the page has no differentiating layer, and it competes with thousands of equally generic AI pages.

Pattern 2: Fabricated or unverified statistics

AI models hallucinate. They produce confident-sounding statistics with no basis in reality, especially for niche topics where training data is thin. A page full of fabricated numbers does two things: it exposes the site to a reputational hit when readers verify the claims, and it undermines the trust component of E-E-A-T, which depends on accuracy.

The fix is not to avoid statistics. The fix is to verify every number against a primary source before publishing and to include an inline citation. A page with five verified, cited statistics is a stronger page than one with fifteen fabricated ones.

Pattern 3: No original insight

The SEO damage from AI content is concentrated in pages that add nothing beyond what is already indexed. If every page on “is ai content bad for seo” covers the same four points in the same order, a new page covering those same four points in the same order gives Google no reason to rank it above the existing ones.

Original insight means: first-hand observation from working on the problem, proprietary data, a framework that doesn’t exist elsewhere, or a direct disagreement with the consensus that is backed by evidence. AI models cannot produce any of these. A human editor who has spent time on the problem can add at least one in under 30 minutes.

Pattern 4: Bulk production aimed at keyword volume

Sites that produce 50 AI articles a week to cover keyword gaps are running a playbook that Google’s helpful content updates are specifically designed to address. The helpful content guidance asks whether content exists primarily to attract search engine visits rather than to help people. A 50-article-per-week AI pipeline with no editorial oversight answers that question clearly.

Volume is not the problem. A site publishing 50 well-edited, genuinely useful pages a week is doing the right thing. Volume combined with no editorial process is the pattern that earns ranking losses.

When AI content is fine

AI-assisted content with a real editorial process behind it ranks normally. The editorial process has to include:

- Fact-checking every statistic against a primary source

- An editor adding at least one original observation the AI could not produce

- A voice pass to remove generic phrasing and add brand character

- A review of whether the page genuinely answers the search intent better than the current top results

This is not a heavy process. For a 1,500-word post, it adds 30 to 60 minutes to the production time. That 30 to 60 minutes is what separates an AI-assisted page that ranks from one that doesn’t.
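
The four steps above can be tracked as a simple publish gate. This is a minimal sketch, not a prescribed tool: the class name, field names, and functions are all illustrative assumptions, and the checks themselves still have to be done by a human editor.

```python
from dataclasses import dataclass, fields


@dataclass
class EditorialChecklist:
    """One flag per editorial step; every name here is hypothetical."""
    stats_verified: bool = False        # every statistic checked against a primary source
    original_observation: bool = False  # editor added something the AI could not produce
    voice_pass_done: bool = False       # generic phrasing removed, brand character added
    beats_top_results: bool = False     # answers the intent better than current top results


def unmet_steps(checklist: EditorialChecklist) -> list[str]:
    """Return the names of the steps that are still open, in checklist order."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


def ready_to_publish(checklist: EditorialChecklist) -> bool:
    """A draft passes the gate only when every step is marked done."""
    return not unmet_steps(checklist)


draft = EditorialChecklist(stats_verified=True, voice_pass_done=True)
print(unmet_steps(draft))       # ['original_observation', 'beats_top_results']
print(ready_to_publish(draft))  # False
```

The gate records decisions; it does not make them. Its only job is to stop a draft from shipping while a step is still open.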

For more on the broader question of whether AI content works in SEO, see “Is AI content good for SEO”, which covers the performance data side.

The editorial fix

If you have AI content on your site that is underperforming, the audit process is straightforward:

- Pull the affected pages from Google Search Console. Look for pages with high impressions but low clicks, and for pages that are indexed but not ranking.

- For each page, read it as a searcher. Does it answer the query better than the current top result? If no, that’s the problem.

- Add one layer the AI could not produce: a specific example from your own experience, a verification of the key statistic against a live source, or a direct answer that goes further than the generic consensus.

- Add an editorial QC step to your publishing workflow. Even a simple checklist (verify stats, add one original observation, check voice, confirm it answers the intent) closes most of the gap.
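
The first audit step, finding pages with impressions but few clicks, can be sketched against a performance export. Treat everything specific here as an assumption: the column names (`page`, `clicks`, `impressions`, `position`), the sample rows, and both thresholds are made up for illustration, and a real Search Console export may label its columns differently.

```python
import csv
import io

# Hypothetical export data; real exports may use different column names.
SAMPLE_EXPORT = """page,clicks,impressions,position
/ai-content-seo,4,1800,9.2
/editorial-checklist,95,1500,3.1
/keyword-gap-guide,0,40,48.0
/fact-checking-ai,2,900,14.5
"""

MIN_IMPRESSIONS = 500  # assumed cut-off for "enough impressions to matter"
MAX_CTR = 0.01         # assumed cut-off for "low clicks" (1% click-through)


def pages_to_audit(csv_text: str) -> list[dict]:
    """Return pages with many impressions but a low click-through rate,
    ordered by impressions, highest first."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = int(row["impressions"])
        clicks = int(row["clicks"])
        if impressions < MIN_IMPRESSIONS:
            continue  # too little data to judge the page
        ctr = clicks / impressions
        if ctr <= MAX_CTR:
            flagged.append(
                {"page": row["page"], "impressions": impressions, "ctr": round(ctr, 4)}
            )
    return sorted(flagged, key=lambda p: p["impressions"], reverse=True)


for page in pages_to_audit(SAMPLE_EXPORT):
    print(page["page"], page["impressions"], page["ctr"])
```

The output is only a reading list. Each flagged page still needs the second step: reading it as a searcher and comparing it with the current top result.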

The site from the opening of this post recovered its traffic over six months. The fix was not removing AI content. It was adding an editorial gate that the original workflow had skipped.

For the connection between this and the broader AI SEO picture, the AI SEO overview covers where content quality sits in the full citation and ranking framework.

FAQ

Will Google penalise my site for AI content?

Not for AI content specifically. Google’s stance, stated in its February 2023 Search Central post, is that it rewards content that demonstrates experience, expertise, authoritativeness, and trust, regardless of production method. What Google penalises is content designed to manipulate rankings rather than help readers. That standard applies equally to human and AI-written pages.

Does AI content always rank lower?

No. AI-assisted content with strong editorial oversight, original data, clear expertise signals, and a genuine answer to the search intent ranks normally. The ranking gap appears when AI content is published without fact-checking or editing, producing generic, shallow pages that fail on helpfulness, not on AI origin.

How do I know if my AI content is hurting SEO?

Check Google Search Console for impressions and clicks on AI-assisted pages compared to manually written ones on equivalent topics. If AI pages have high impressions but very low click-through rates, the titles are weak. If they rank but have high bounce rates (measured in your analytics tool, since Search Console does not report bounce rate), the content is not matching search intent. If they are not indexed at all, check crawl coverage. The data tells you more than any heuristic about AI detection.
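
The three symptoms above map to three likely causes, which can be written down as a small triage function. This is a rough sketch under stated assumptions: the function name, its parameters, and all three thresholds are hypothetical, and the bounce rate would have to come from an analytics tool rather than from Search Console.

```python
from typing import Optional

HIGH_IMPRESSIONS = 500  # assumed cut-off for "high impressions"
LOW_CTR = 0.01          # assumed cut-off for "very low click-through rate"
HIGH_BOUNCE = 0.80      # assumed cut-off for "high bounce rate"


def diagnose(indexed: bool, impressions: int, clicks: int,
             bounce_rate: Optional[float] = None) -> str:
    """Map a page's signals to the likely problem described in the text."""
    if not indexed:
        return "check crawl coverage"
    # The impressions check runs first, so the division never sees zero.
    if impressions >= HIGH_IMPRESSIONS and clicks / impressions <= LOW_CTR:
        return "weak title"
    if bounce_rate is not None and bounce_rate >= HIGH_BOUNCE:
        return "content not matching search intent"
    return "no obvious issue from these signals"


print(diagnose(indexed=True, impressions=2000, clicks=5))
print(diagnose(indexed=True, impressions=800, clicks=60, bounce_rate=0.9))
print(diagnose(indexed=False, impressions=0, clicks=0))
```

The order of the checks is a design choice: indexing comes first because a page that is not indexed has no meaningful click or bounce data to interpret.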

What makes AI content bad for SEO?

Four patterns: no editorial review before publishing, fabricated or unverified statistics, no original insight beyond what is already ranking, and bulk production aimed at keyword volume rather than reader value. Any one of these can undermine a page. Combined, they describe the pattern Google’s helpful content guidance is designed to filter.

How do I fix bad AI content?

Audit the affected pages in Search Console. For pages with impressions but low clicks, rewrite the title and description. For pages ranking but not converting, rewrite the content to match intent more precisely and add original observations. For pages that are not indexed, check crawl coverage first. Then add an editorial QC step to your publishing workflow to prevent the pattern from recurring.

The actual risk

The risk with AI content is not that Google can detect it. The risk is that AI makes it easier to publish content that was never good enough to rank, at a scale that compounds the problem. The editorial process is not a nice-to-have. It is the mechanism that turns an AI draft into a page worth ranking.

If you want to build that editorial layer into a repeatable workflow without it becoming a bottleneck, the AI SEO service covers how I structure it for clients running content at volume.