- llms.txt is a plain-text file at your site root that lists your most authoritative pages for AI crawlers to prioritize.

- No major AI lab officially supports it yet — treat it as a low-cost belt-and-braces move, not a citation guarantee.

- It costs under 10 minutes to publish and zero to maintain; the risk of adding it is near zero.

- The bigger win is making sure GPTBot, ClaudeBot, and PerplexityBot are allowed in robots.txt first.

You have already allowed GPTBot in your robots.txt, added JSON-LD structured data to your pillar pages, and written direct-answer blocks that pass the schema validator. Your indexability checks out clean. Then someone in a GEO forum mentions llms.txt, and you wonder: is there one more file you should be publishing at your site root? That file is llms.txt, and understanding what is llms.txt and why does it matter for seo comes down to a simple idea: it is a curated reading list you publish for AI systems, not a ranking signal you submit to a search engine.

What is llms.txt and why does it matter for SEO?

Direct answer: llms.txt is a plain-text file placed at your site root (e.g.,

yourdomain.com/llms.txt) that lists your most authoritative pages alongside one-line descriptions. Proposed by Answer.AI in late 2024, it acts as a curated shortlist for AI crawlers. No major AI lab officially supports it as of early 2026, but it costs almost nothing to publish and carries no known downside.

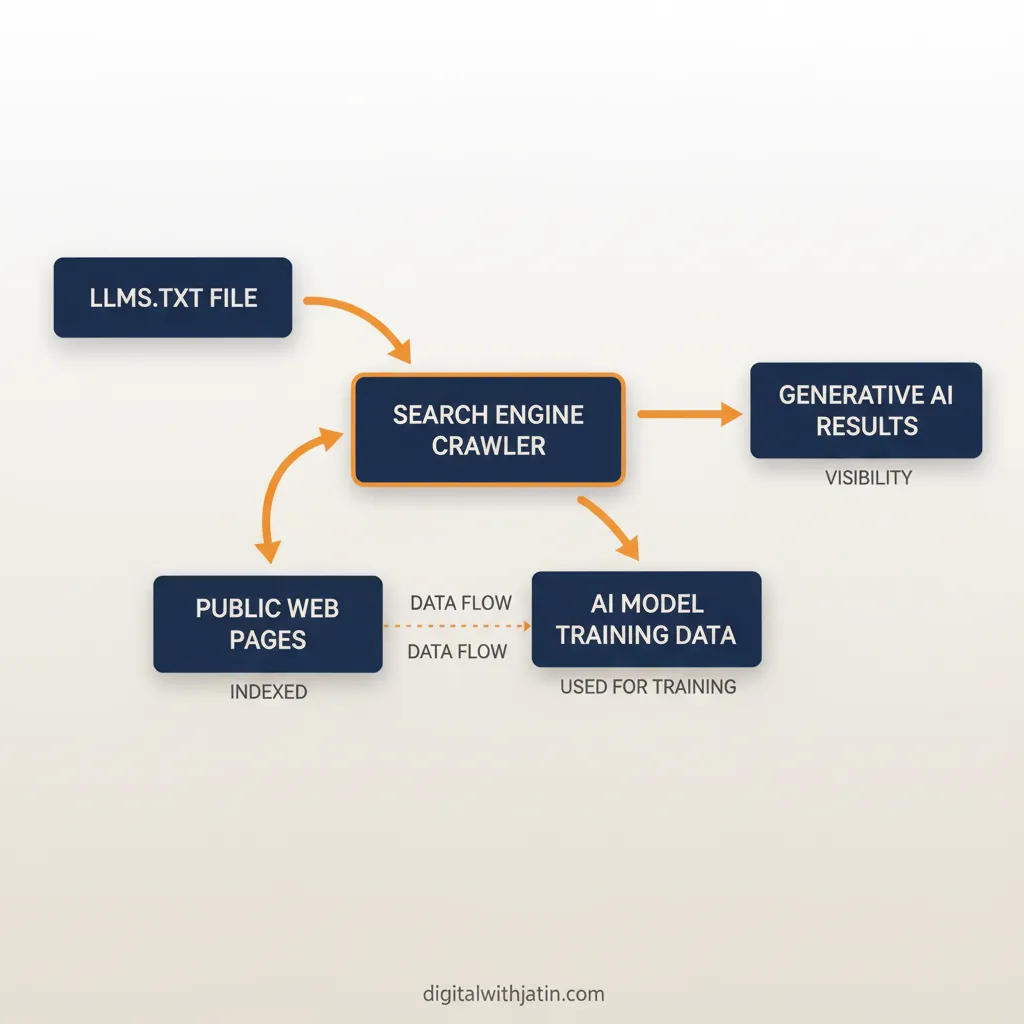

The format borrows the simplicity of robots.txt while borrowing the intent of a sitemap: tell a reading system where your best content lives, so it does not have to guess. Where a sitemap hands a search engine every URL equally, llms.txt hands an AI crawler a prioritized shortlist with plain-English context for each entry. That distinction matters because AI systems often work with limited crawl budget and want to surface the most authoritative passage for a given query.

How llms.txt works

The llms.txt specification is intentionally simple. The file lives at the root of your domain, uses plain text, and follows a loose Markdown-like structure. A hash symbol starts a comment or heading. A greater-than symbol marks a short site description. Grouped links with one-line summaries make up the body.

Here is what a real llms.txt file looks like in practice:

# llms.txt — Digital With Jatin

> AI SEO and GEO consulting by Jatin Lokwani

## Key pages

- [AI SEO Guide](/blog/ai-seo): The complete playbook for earning citations in AI Overviews, ChatGPT, and Perplexity.

- [AI Technical SEO](/blog/what-ai-simplifies-technical-seo-work): What AI automates in technical SEO and what still needs a practitioner.

- [GEO / AEO Services](/services/geo-aeo): Citation engineering for brands with zero AI search presence.The file is not validated by any official body. There is no schema validator for it, no submission portal, and no ping mechanism like IndexNow. You publish it, and any AI crawler that has adopted the specification can read it on its next visit.

The one-line descriptions matter more than the URL list. An AI crawler already knows URLs exist; what it gains from llms.txt is human-curated context about which pages are the definitive ones on each topic. Write descriptions the way you would write a meta description: one specific sentence that captures what the page proves, not what it contains.

You can also publish a companion file at yourdomain.com/llms-full.txt that includes the full text of key pages, rather than just links. That companion file is optional and suited to sites whose content is short enough to embed (documentation hubs, glossaries, FAQ sets).

How llms.txt differs from robots.txt and sitemap.xml

Each of the three files serves a different audience and a different purpose. Conflating them leads to misplaced effort.

| File | Purpose | Audience |

|---|---|---|

| robots.txt | Controls which URLs crawlers may or may not access | All bots (Googlebot, GPTBot, ClaudeBot, etc.) |

| sitemap.xml | Lists all indexable URLs with optional metadata (last modified, priority) | Search engine indexers |

| llms.txt | Curated shortlist of your most authoritative pages with plain-English context | AI large-language-model crawlers (experimental) |

robots.txt is a permission gate: it says “you may not enter here.” Sitemap.xml is an exhaustive inventory: it says “here is everything I publish.” llms.txt is an editorial shortlist: it says “if you only read five pages, read these, and here is why each one matters.”

The files are additive. Publishing llms.txt does not replace the need for a clean sitemap or a correctly configured robots.txt that permits AI crawlers. It sits on top of the other two, not instead of them. A site that blocks GPTBot in robots.txt but publishes a perfect llms.txt gets no benefit: the AI crawler never reaches the file in the first place.

Does llms.txt actually improve AI citations?

Honestly, no one can confirm it does, yet. As of early 2026, neither Google, OpenAI, nor Anthropic has published documentation stating that llms.txt influences citations or AI Overview inclusion. Google’s AI Overviews rely on the existing search index, built through standard Googlebot crawls, not through a separate AI-specific file.

OpenAI’s GPTBot documentation describes how GPTBot works and how to allow or block it via robots.txt. It does not mention llms.txt as an input to ChatGPT’s citation decisions. Anthropic’s guidance on ClaudeBot similarly focuses on robots.txt controls and does not reference llms.txt as a supported signal.

PerplexityBot and other AI crawler user agents have no published llms.txt support statement either.

So why bother? Because the cost of not publishing it is the same as the cost of publishing it: essentially zero. The file takes less than ten minutes to write for a typical site with five to ten pillar pages. If any AI system does adopt the format in the next twelve months, your signal will already be in place. That is the belt-and-braces argument: you do it not because you know it works, but because the downside of skipping it is identical to the downside of doing it wrong, and doing it right costs nothing.

Treat llms.txt as a low-priority hygiene item. It belongs at the bottom of your AI SEO checklist, after confirmed-impact items like robots.txt configuration, structured data, and direct-answer passage optimization.

How to add llms.txt to your site

Publishing llms.txt is a five-step process that most site owners can complete in under ten minutes.

Step 1: Create the file. Open a plain-text editor (not Word or Google Docs, which add invisible formatting). Name the file llms.txt exactly. No spaces, no capital letters.

Step 2: Write the header. The first line should be a comment with your site name, prefixed by a hash. The second line should be a one-sentence site description prefixed by a greater-than symbol.

# llms.txt — Your Site Name

> One sentence describing what your site covers and who it is for.Step 3: List your key pages. Group them under a ## Key pages heading. Each entry is a Markdown-style link followed by a colon and a one-sentence description. Limit the list to your five to ten most authoritative pages. Pillar posts, cornerstone guides, and service pages are the right candidates. Do not list every URL; that is what sitemap.xml is for.

Step 4: Upload to your site root. The file must be accessible at yourdomain.com/llms.txt. For static sites (Astro, Hugo, Jekyll), drop the file into your public/ or static/ directory. For WordPress, place it in the root of your server alongside robots.txt.

Step 5: Verify and optionally link it. Open yourdomain.com/llms.txt in a browser. If the plain text renders correctly, you are done. You can optionally add a <link rel="llms" href="/llms.txt"> tag in your site’s <head>, though no crawler has confirmed it reads this hint.

There is no submission step, no freshness ping, and no monitoring dashboard. Revisit the file when you publish a new pillar page or retire an old one.

What to do before worrying about llms.txt

llms.txt is the last item on this list, not the first. Before you open a text editor, confirm these higher-priority items are in order.

1. Allow AI crawlers in robots.txt. If GPTBot, ClaudeBot, Google-Extended, or PerplexityBot is blocked in your robots.txt, no AI system can read your content regardless of what your llms.txt says. Check your disallow rules first.

2. Fix crawl budget and indexability issues. Redirect chains, noindex tags on pillar pages, and orphaned content all hurt how AI crawlers understand your site structure. A clean sitemap.xml submitted to Google Search Console is still the most reliable way to signal what matters.

3. Add structured data. JSON-LD schema (FAQPage, HowTo, Article) gives AI systems machine-readable signals about your content’s structure. Google’s AI Overviews draw on these signals when deciding which passages to surface. This has confirmed impact; llms.txt does not.

4. Write direct-answer blocks. Passages that open with a clear, one-sentence answer to a question are the content units AI systems most often quote. No file at your root fixes a page that buries its answer in paragraph four.

Once all four items are in order, publish your llms.txt. At that point you have done everything a practitioner can reasonably do with the current state of AI crawler documentation.

FAQ

What is llms.txt?

llms.txt is a plain-text file placed at the root of a website (e.g. yourdomain.com/llms.txt) that lists the site’s most useful pages and a one-line summary of each. The format was proposed by Answer.AI in 2024 as a way for site owners to guide AI large-language-model crawlers toward their best content.

Does llms.txt help with SEO or AI search citations?

There is no confirmed ranking or citation signal from llms.txt as of early 2026. No major AI lab (Google, Anthropic, OpenAI) has officially announced support. It may help AI systems that do adopt it find your best pages faster, but it is not a substitute for structured data, crawlability, and passage-level optimization.

Should I add llms.txt to my site?

Yes, if you can publish it in under 10 minutes. The file is a low-effort signal: list your pillar pages, add one-line descriptions, publish at the root. No AI lab requires it, but none penalizes it either. Prioritize robots.txt AI crawler access and schema markup first. Those have confirmed impact.

How is llms.txt different from robots.txt and sitemap.xml?

robots.txt tells crawlers what they cannot access. sitemap.xml lists all URLs for indexing. llms.txt is a curated shortlist of your most authoritative pages with human-readable context, intended to help LLMs prioritize rather than index everything equally. It is additive, not a replacement for the other two.

Back to the scenario from the opening: you have allowed GPTBot and ClaudeBot in robots.txt, added JSON-LD structured data to every pillar page, and written direct-answer blocks that any AI crawler can extract cleanly. Publishing llms.txt is the next logical step, a ten-minute file that signals your editorial priorities to any AI system prepared to read it. It is not a citation guarantee, and it is not what is llms.txt and why does it matter for seo in terms of confirmed ranking impact. It is a well-formed bet on a format that costs nothing to place. For a deeper look at what AI tools actually automate in a technical SEO workflow, read what AI simplifies in technical SEO work. If you want a practitioner to handle AI crawler access, structured data, and citation engineering for your site, see the AI SEO automation services.